In this feature, we describe the latest happenings and recent patent applications around autonomous vehicles.

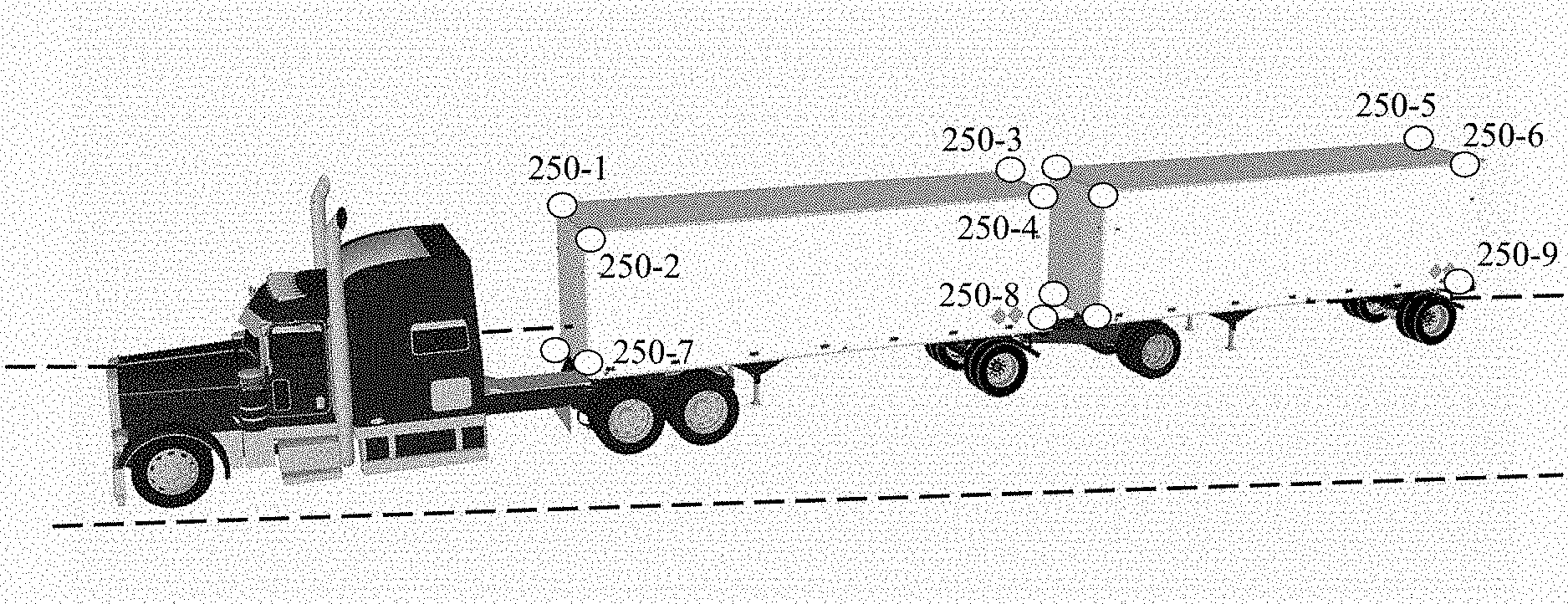

Amazon Eyes a Stake in AI Self-Driving Truck Startup Plus

Amazon is hoping that autonomous trucks will someday power its vast delivery network. The company has placed an order for 1,000 autonomous driving systems from Plus, a startup founded in 2016 to disrupt the long-haul trucking industry with self-driving technology. The deal will also give Amazon the right to acquire up to 20 percent of Plus. Read more about the announcement here.

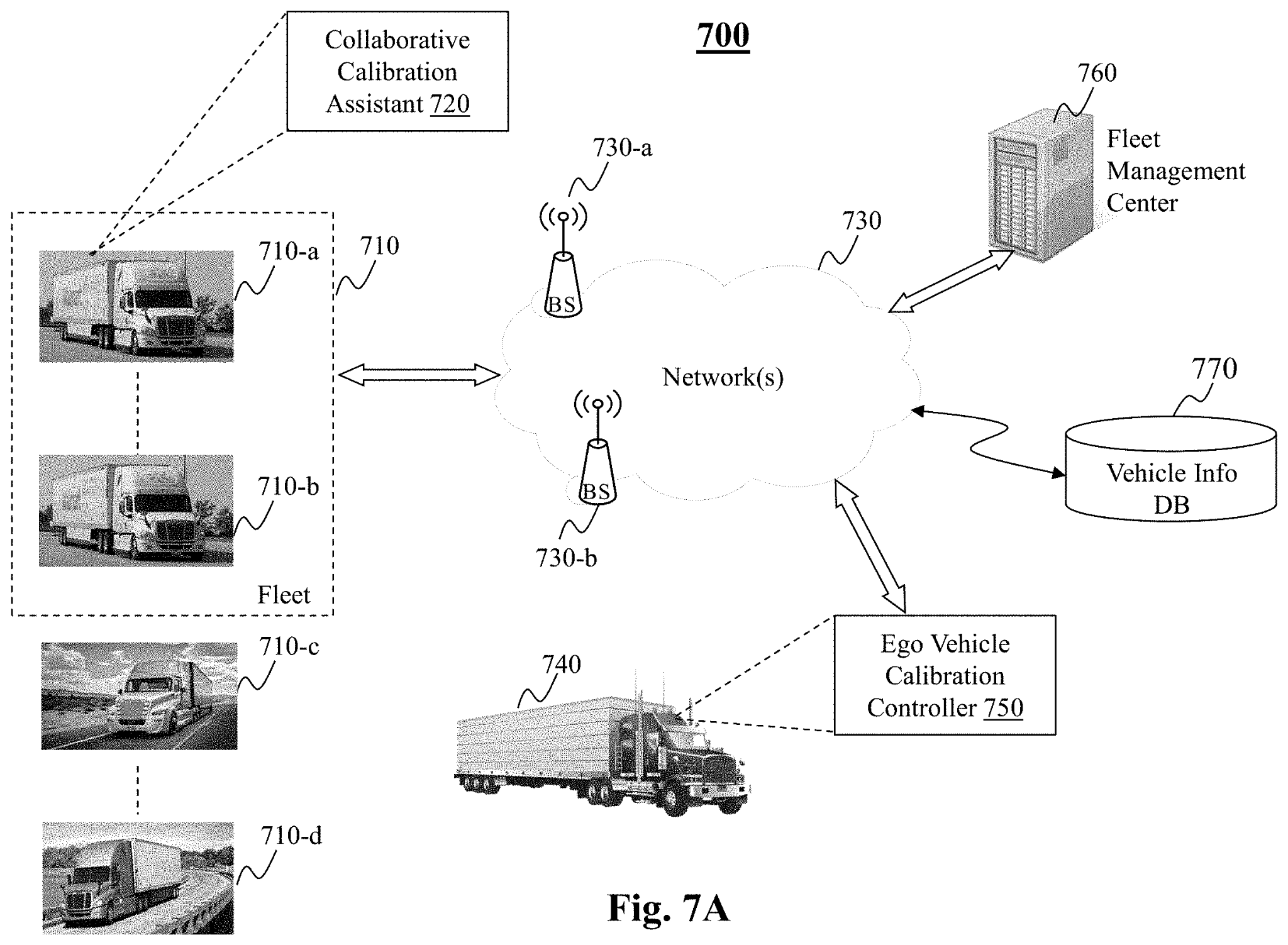

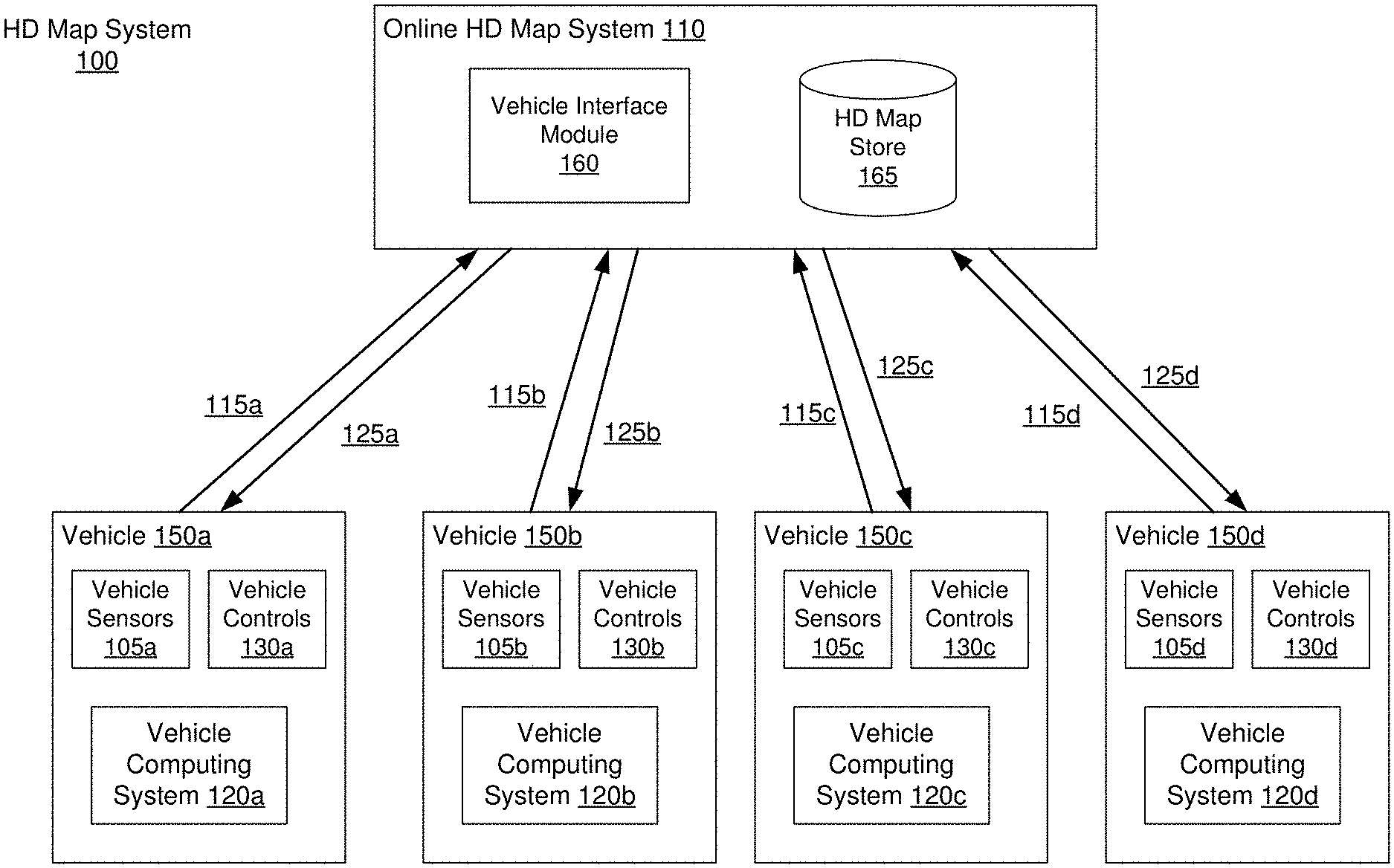

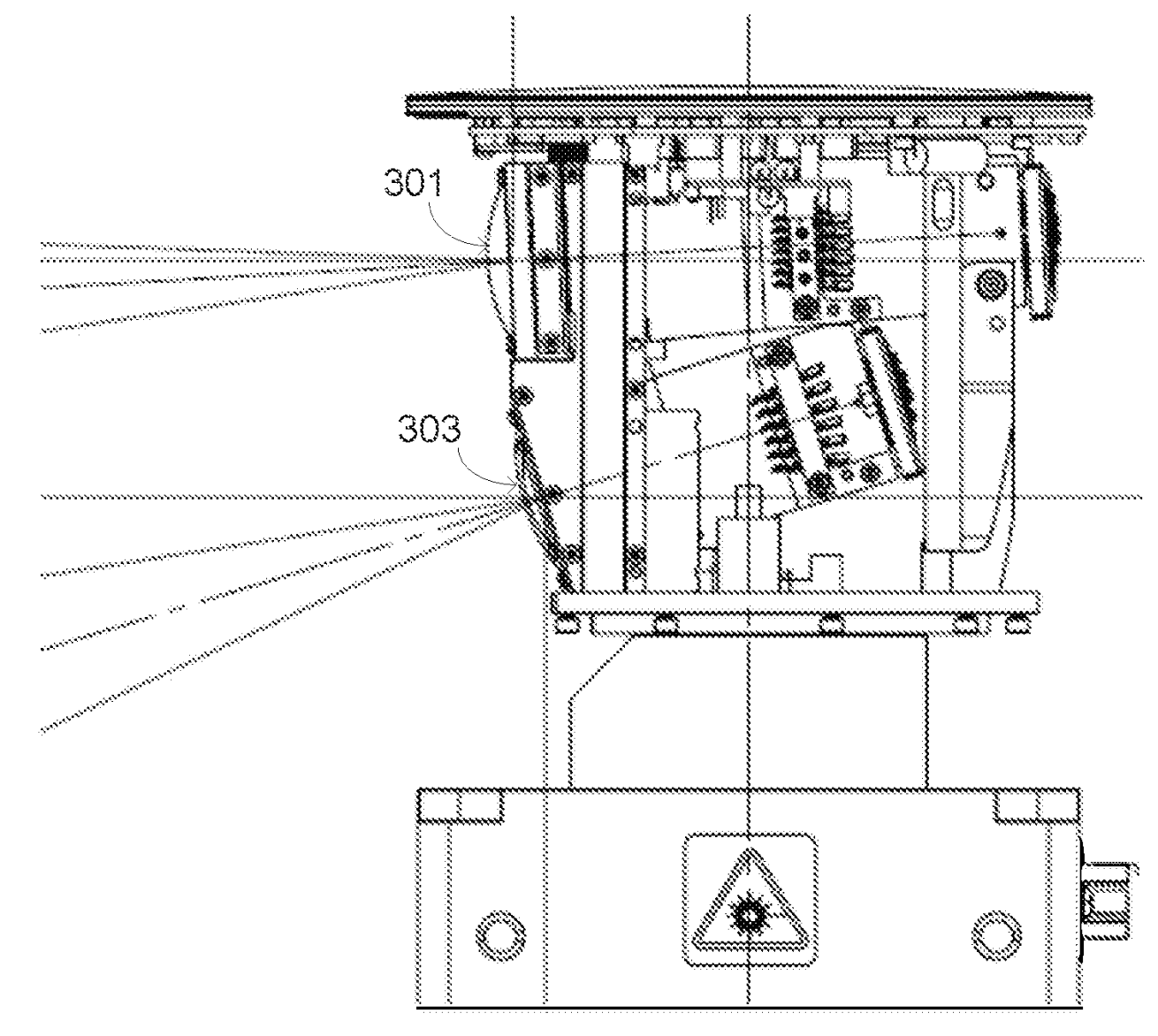

U.S. patent applications US20210172761A1 titled “System and Method for Collaborative Calibration via Landmark” and US20210174685A1 titled “System and Method for Coordinating Collaborative Sensor Calibration”, assigned to PlusAI, describe a collaborative sensor calibration method that supports the autonomous driving needs of an ego vehicle (740) connected to assistant vehicles (710) via a remote center (760). The ego vehicle receives a calibration assistant package containing information about a collaborative means present along its route that can help the ego vehicle calibrate its sensor. Using this information, the ego vehicle identifies the collaborative means once it comes near it along the route, then captures a target present on the collaborative means so that the sensor can be calibrated.
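The identification step can be pictured as a proximity check against the locations carried in the package. The sketch below is a hypothetical illustration, not PlusAI's method: the `CollaborativeMeans` and `CalibrationAssistantPackage` types, their fields, the planar (x, y) coordinates in meters, and the 50 m vicinity radius are all assumptions.

```python
from dataclasses import dataclass, field
import math


@dataclass
class CollaborativeMeans:
    # Hypothetical fields: an ID, a planar position in meters, and the kind of
    # calibration target mounted on it (e.g., a checkerboard on an assistant truck).
    means_id: str
    x: float
    y: float
    target_type: str


@dataclass
class CalibrationAssistantPackage:
    # Hypothetical package listing the collaborative means along the route.
    sensor_id: str
    means: list = field(default_factory=list)


def means_in_vicinity(package, ego_x, ego_y, radius_m=50.0):
    """Return the collaborative means within radius_m of the ego vehicle,
    nearest first, so the ego vehicle knows which target to capture."""
    nearby = []
    for m in package.means:
        dist = math.hypot(m.x - ego_x, m.y - ego_y)
        if dist <= radius_m:
            nearby.append((dist, m))
    nearby.sort(key=lambda pair: pair[0])
    return [m for _, m in nearby]


package = CalibrationAssistantPackage(
    sensor_id="front_camera",
    means=[
        CollaborativeMeans("truck_A", 120.0, 0.0, "checkerboard"),
        CollaborativeMeans("sign_B", 30.0, 4.0, "checkerboard"),
    ],
)
print([m.means_id for m in means_in_vicinity(package, 0.0, 0.0)])  # ['sign_B']
```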

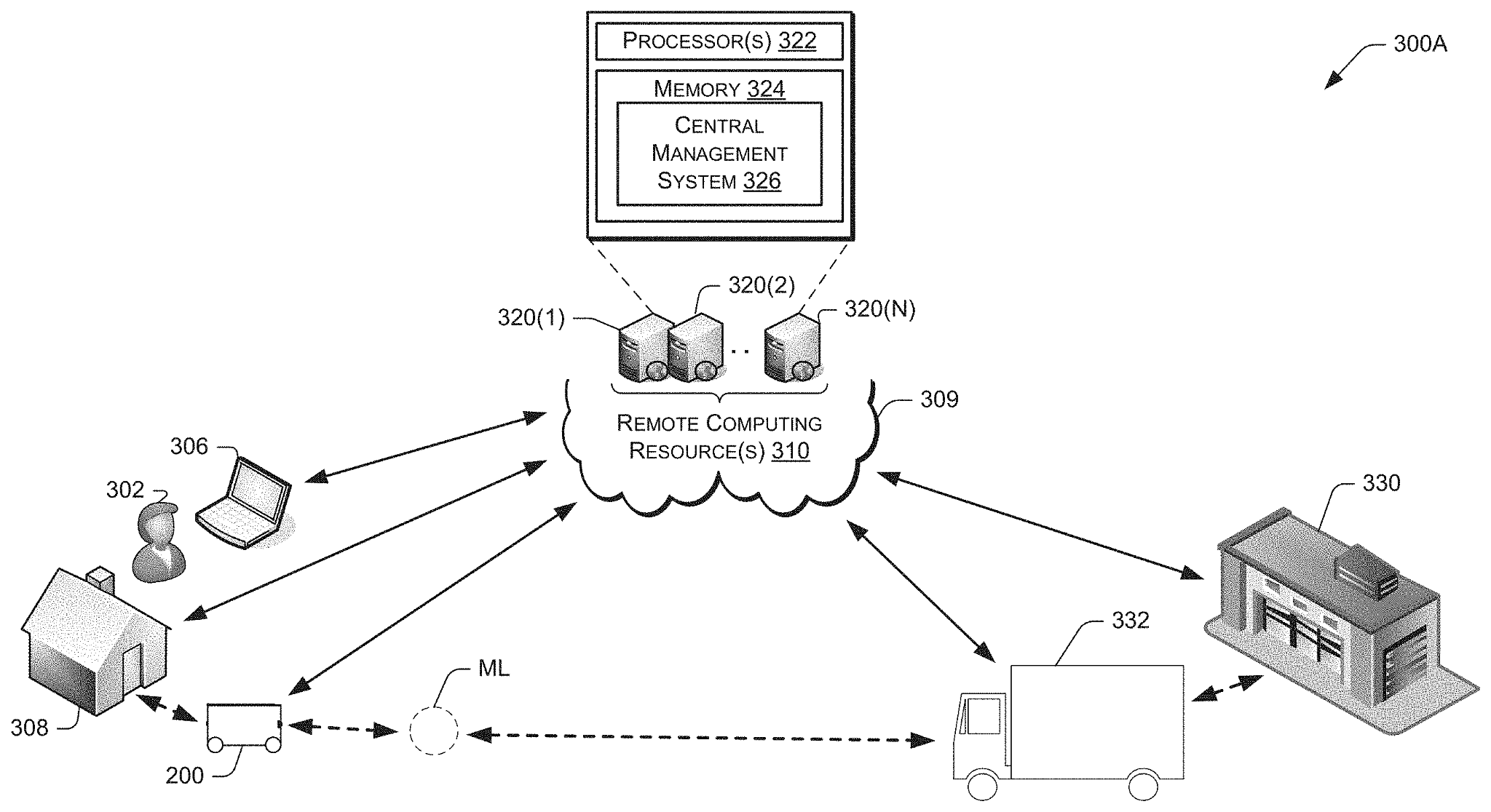

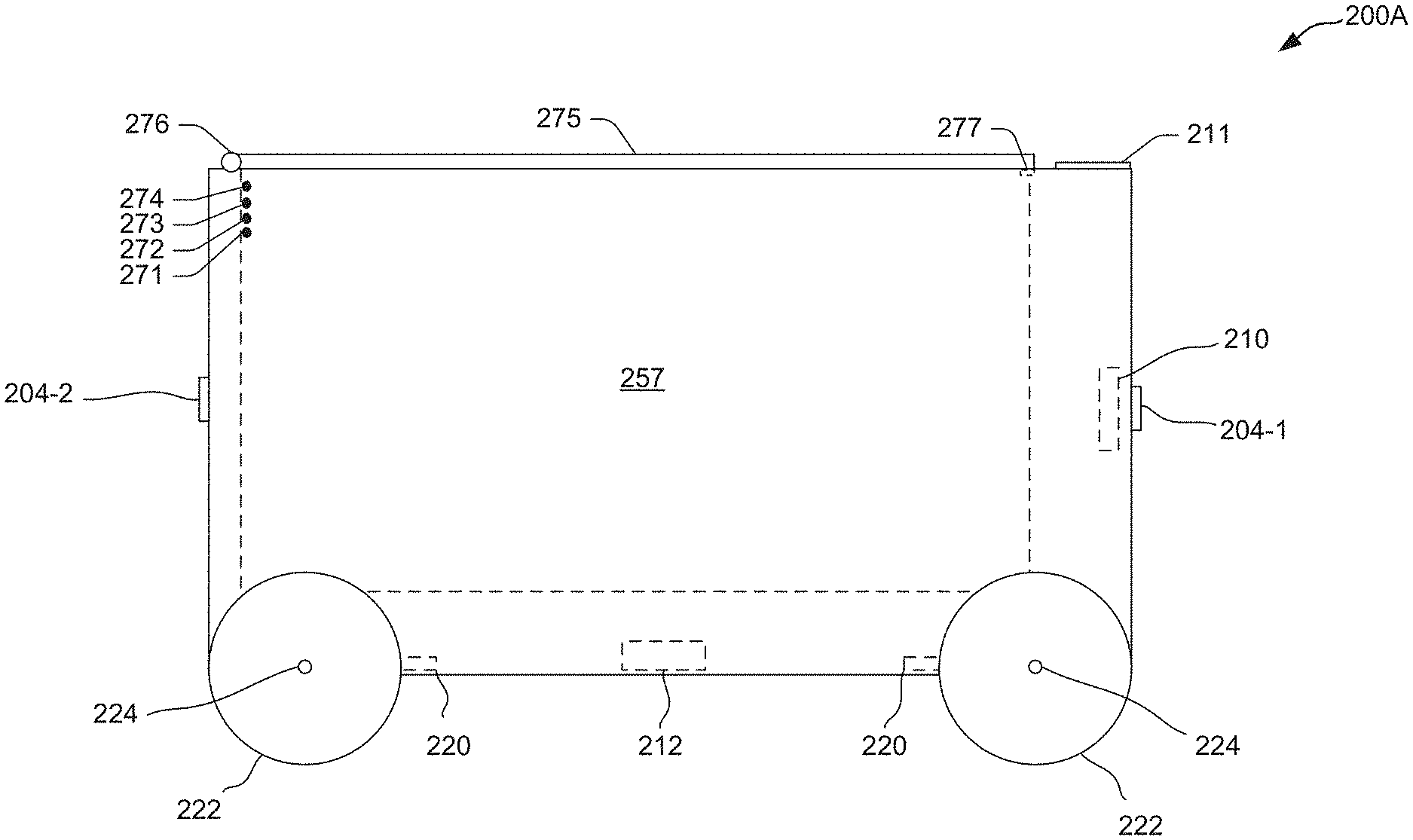

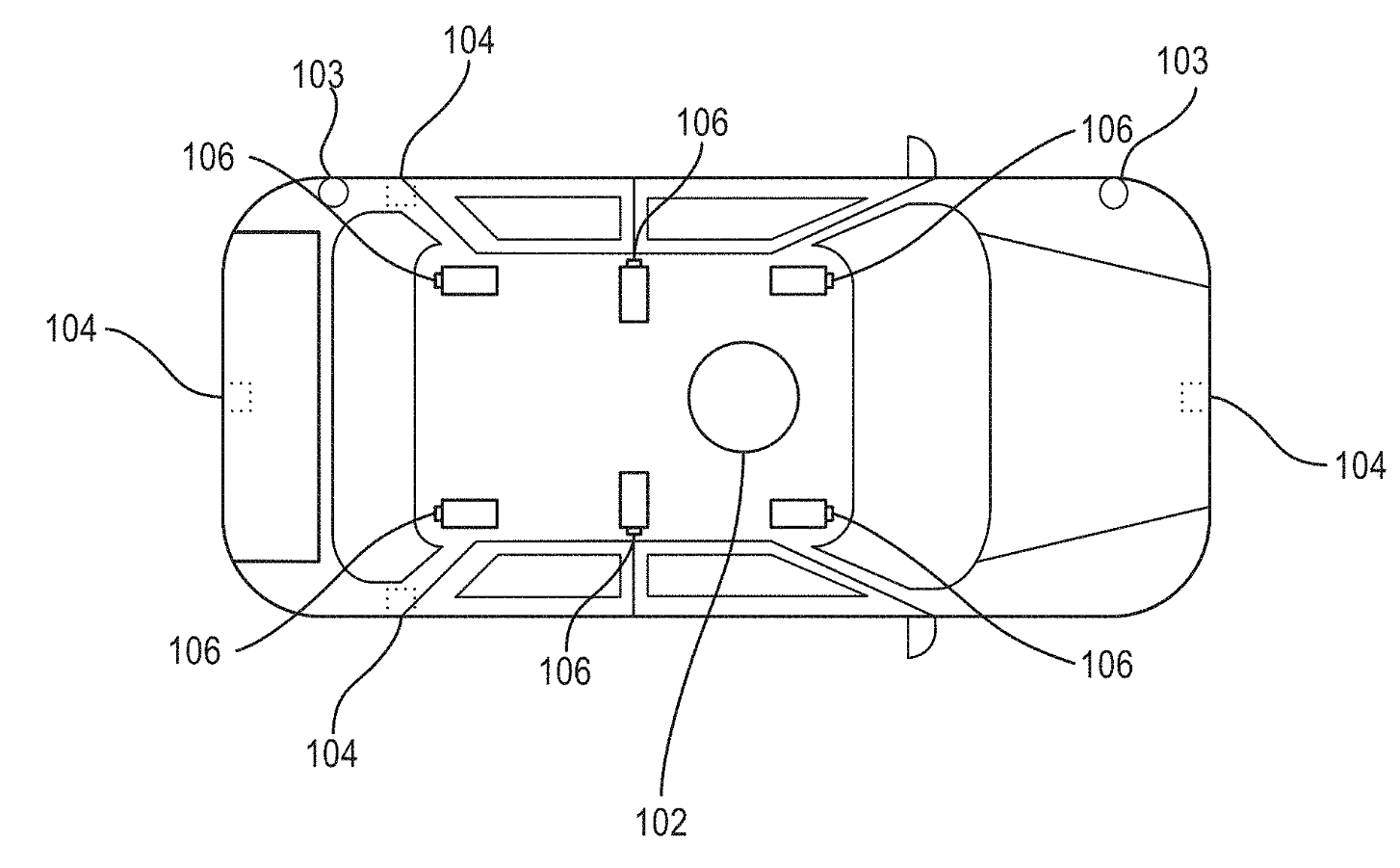

U.S. patent application US20210141377A1 titled “Autonomous Ground Vehicles Having Access Mechanisms and Receiving Items from Transportation Vehicles for Delivery”, assigned to Amazon Technologies, describes autonomous ground vehicles (“AGVs”) (200) that receive items from transportation vehicles (e.g., delivery trucks) (332) for delivery to specified locations (e.g., user residences). The AGVs may travel along travel paths to and from the delivery locations to deliver the items, and may be equipped with access mechanisms (e.g., devices that transmit remote control signals) to open and close access barriers (e.g., garage doors) encountered along the travel paths.
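The access-mechanism behavior amounts to opening each barrier before passing through it and closing it afterward. The following is a minimal sketch assuming a path given as waypoint names and a hypothetical mapping from waypoints to barrier IDs; the action strings stand in for whatever remote control signals a real AGV would transmit.

```python
def plan_barrier_actions(path, barriers):
    """Walk a travel path and list the actions an AGV would take.

    path: ordered list of waypoint names (hypothetical representation).
    barriers: dict mapping a waypoint to the ID of an access barrier
              (e.g., a garage door) located there (hypothetical).
    """
    actions = []
    for waypoint in path:
        barrier = barriers.get(waypoint)
        if barrier is not None:
            # Send an "open" signal before passing through the barrier...
            actions.append(f"open:{barrier}")
            actions.append(f"pass:{waypoint}")
            # ...and a "close" signal once the AGV is through.
            actions.append(f"close:{barrier}")
        else:
            actions.append(f"pass:{waypoint}")
    return actions


path = ["truck", "driveway", "garage", "porch"]
print(plan_barrier_actions(path, {"garage": "garage_door_1"}))
```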

Velodyne to Focus on Small and Low-Cost Solid-State Lidar Sensors

Velodyne Lidar, a leading manufacturer of lidar sensors, has developed a product priced as low as one-hundredth the cost of other lidar sensors. Such a steep price drop for sensors at the heart of many autonomous car designs could accelerate the development of self-driving vehicles. As the cost of lidar sensors declines, they can be affordably combined with cameras to create vision systems that are both safe and affordable. Read more here.

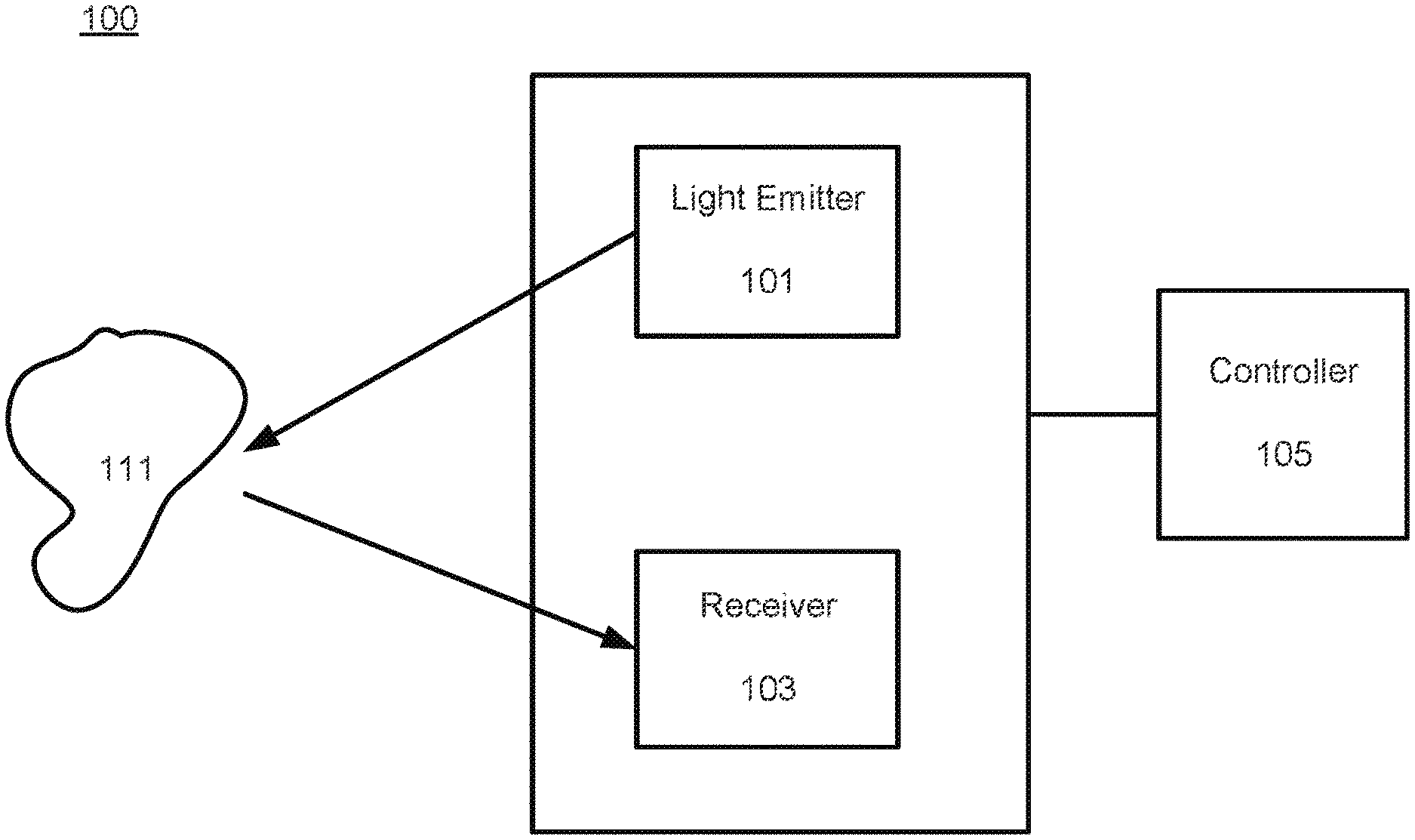

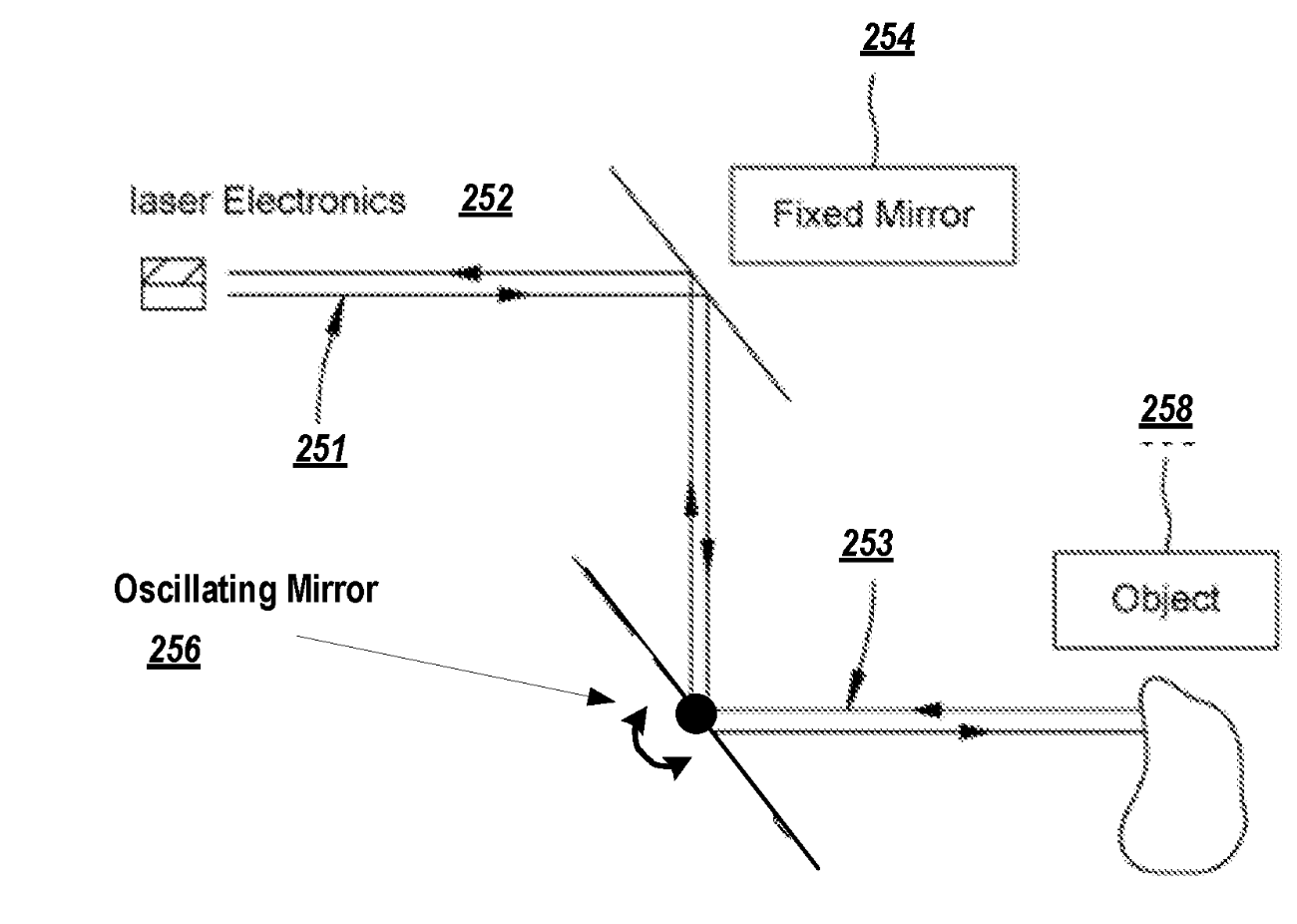

U.S. patent application US20210003681A1 titled “Interference Mitigation for Light Detection and Ranging”, assigned to Velodyne Lidar, describes dithering techniques that reduce the impact of signal interference in lidar sensors and systems used in autonomous vehicles. The system's filtering subsystem receives the electrical signals from the receiver and determines whether a point is coherent with its neighboring points along at least a first direction and a second direction of the set of points.
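The coherence test can be illustrated on a range image whose rows correspond to laser channels (one direction) and whose columns correspond to azimuth steps (the other). In this hypothetical sketch, which is not Velodyne's published algorithm and does not cover the dithering itself, a return is kept if its range agrees within a tolerance with at least one neighbor along either direction; an isolated spike with no agreeing neighbor is treated as likely interference. The 0.5 m tolerance and the either-direction rule are assumptions.

```python
def coherent(ranges, r, c, tol):
    """True if ranges[r][c] agrees with a neighbor along the azimuth
    direction or the channel direction, within tol meters.
    (Assumed rule: agreement in either direction is enough.)"""
    value = ranges[r][c]
    rows, cols = len(ranges), len(ranges[0])
    # First direction: horizontal neighbors (adjacent azimuth steps).
    horizontal = [ranges[r][cc] for cc in (c - 1, c + 1) if 0 <= cc < cols]
    # Second direction: vertical neighbors (adjacent laser channels).
    vertical = [ranges[rr][c] for rr in (r - 1, r + 1) if 0 <= rr < rows]
    return any(abs(value - n) <= tol for n in horizontal + vertical)


def filter_interference(ranges, tol=0.5):
    """Replace incoherent returns with None (assumed 'no valid return')."""
    return [
        [ranges[r][c] if coherent(ranges, r, c, tol) else None
         for c in range(len(ranges[0]))]
        for r in range(len(ranges))
    ]


scan = [
    [10.0, 10.1, 10.2, 10.3],
    [10.0, 10.1, 3.7, 10.3],   # 3.7 m is an isolated spike
    [10.1, 10.2, 10.2, 10.4],
]
print(filter_interference(scan))  # the 3.7 m spike is replaced by None
```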

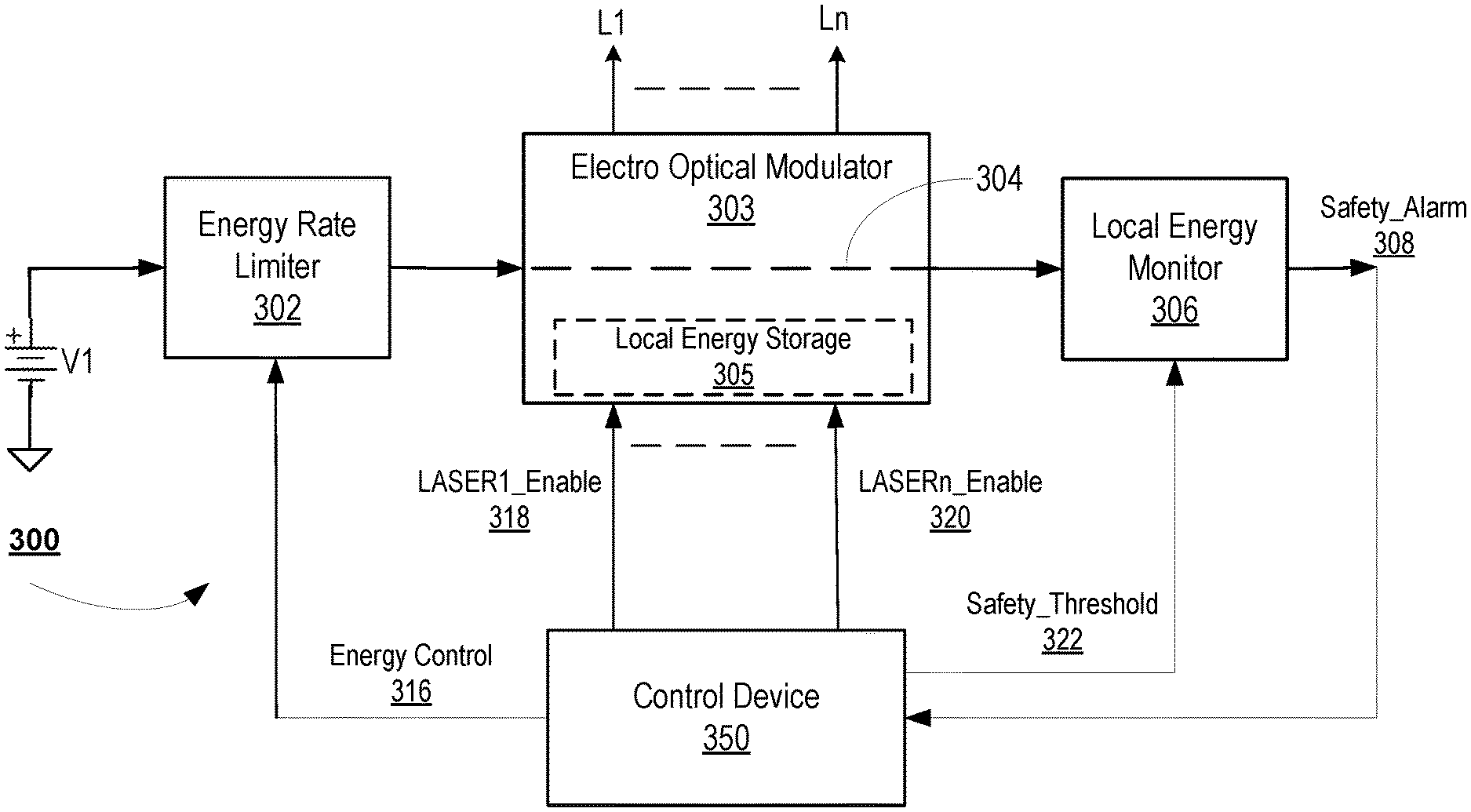

U.S. patent application US20210041567A1 titled “Apparatus and Methods for Safe Pulsed Laser Operation”, assigned to Velodyne Lidar, describes devices and methods that protect users' health during pulsed laser operation by improving eye safety under both expected and unexpected operating conditions of an optical system, such as a lidar (light detection and ranging) system. The system includes an energy rate limiter that terminates energy transfer from the power supply to the local energy storage module when a safety condition is violated.
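An energy rate limiter of this kind can be sketched as a sliding-window budget: energy transfers are allowed until the total over the window would exceed a limit, at which point transfer is terminated and stays off. The class name, the 1-second window, the joule budget, and the latching behavior below are illustrative assumptions, not values or logic taken from the application.

```python
from collections import deque


class EnergyRateLimiter:
    """Hypothetical limiter between a power supply and a local energy store."""

    def __init__(self, max_energy_j, window_s):
        self.max_energy_j = max_energy_j
        self.window_s = window_s
        self.transfers = deque()  # (timestamp_s, energy_j) pairs in the window
        self.terminated = False

    def request(self, t_s, energy_j):
        """Return True if a transfer of energy_j at time t_s is allowed."""
        if self.terminated:
            return False
        # Drop transfers that have slid out of the time window.
        while self.transfers and t_s - self.transfers[0][0] >= self.window_s:
            self.transfers.popleft()
        in_window = sum(e for _, e in self.transfers)
        # Assumed safety condition: energy in the window would exceed the budget.
        if in_window + energy_j > self.max_energy_j:
            self.terminated = True  # terminate transfer and latch off
            return False
        self.transfers.append((t_s, energy_j))
        return True


limiter = EnergyRateLimiter(max_energy_j=1.0, window_s=1.0)
print([limiter.request(t, 0.3) for t in (0.0, 0.2, 0.4, 0.6, 0.8)])
# [True, True, True, False, False]
```

Latching off, rather than resuming once the window clears, is a conservative choice for an eye-safety interlock; a real design might instead require an explicit reset.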

NVIDIA to Acquire DeepMap Inc. to Strengthen Its Autonomous Driving Platform

NVIDIA has agreed to acquire DeepMap to extend its autonomous vehicle technology. The company plans to integrate DeepMap's technology into its Drive platform to bolster mapping and localization capabilities. Drive technology is currently used by Mercedes-Benz, Hyundai, Audi, Volvo and others, in deployments of varying features and complexity. NVIDIA said it expects to finalize the acquisition in Q3 2021. Read more here.

U.S. Patent US10897575B2 titled “Lidar to Camera Calibration for Generating High Definition Maps”, assigned to DeepMap, describes calibrating the sensors on a vehicle so that data captured by different sensors can be combined to generate high definition maps, improving both the quality of the maps and the efficiency of generating them; the method is implemented as instructions stored on a non-transitory computer-readable storage medium. The system uses a pattern (e.g., a checkerboard) in view of the sensors, placed first close to the vehicle to determine an approximate lidar-to-camera transform and then at a distance from the vehicle to determine an accurate lidar-to-camera transform.
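Both stages come down to estimating a rigid transform (rotation R and translation t) that maps points in the lidar frame onto the same points in the camera frame. One standard way to do that, given matched 3D checkerboard corners seen by both sensors, is the SVD-based Kabsch method sketched below with NumPy. This is a swapped-in textbook technique for illustration; the patent's actual estimation procedure, and how it obtains corner correspondences from the near and far pattern placements, may differ.

```python
import numpy as np


def estimate_rigid_transform(lidar_pts, camera_pts):
    """Kabsch method: find R, t minimizing ||R @ p + t - q|| over matched rows.

    lidar_pts, camera_pts: (N, 3) arrays of corresponding points
    (assumed already matched), N >= 3 and not all collinear.
    """
    lidar_c = lidar_pts.mean(axis=0)
    camera_c = camera_pts.mean(axis=0)
    # Cross-covariance of the centered point sets.
    h = (lidar_pts - lidar_c).T @ (camera_pts - camera_c)
    u, _, vt = np.linalg.svd(h)
    # Guard against a reflection (det = -1) solution.
    d = np.sign(np.linalg.det(vt.T @ u.T))
    r = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    t = camera_c - r @ lidar_c
    return r, t


# Synthetic check: board corners transformed by a known rotation and offset.
theta = np.deg2rad(30.0)
r_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta), np.cos(theta), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([0.5, -0.2, 1.5])
corners = np.array([[2.0, 0.0, 0.0], [2.0, 1.0, 0.0],
                    [2.0, 0.0, 1.0], [2.0, 1.0, 1.0], [2.5, 0.5, 0.5]])
r_est, t_est = estimate_rigid_transform(corners, corners @ r_true.T + t_true)
print(np.allclose(r_est, r_true), np.allclose(t_est, t_true))  # True True
```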

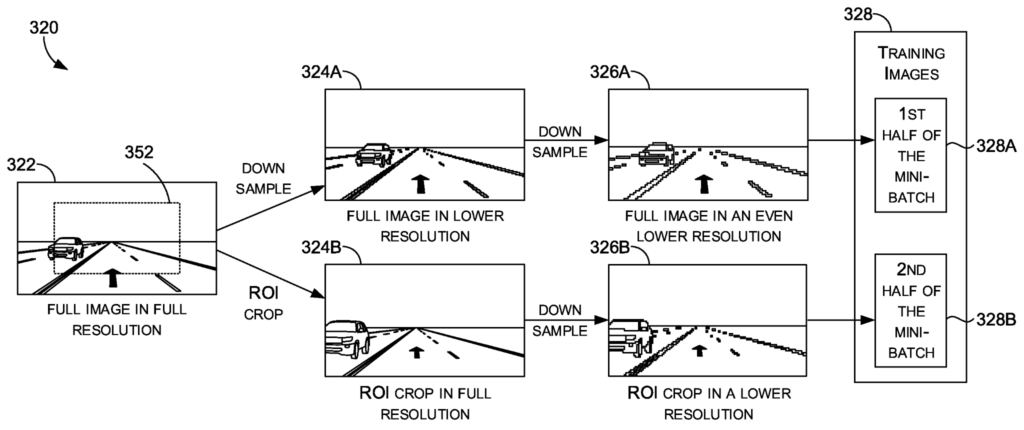

U.S. Patent US10997433B2 titled “Real-Time Detection of Lanes and Boundaries by Autonomous Vehicles”, assigned to NVIDIA, describes detecting lanes and road boundaries in real time using deep neural networks (DNNs). The DNN is designed to infer lane and boundary markers and to generate one or more segmentation masks (e.g., binary and/or multi-class) that identify where potential lanes and road boundaries may be located in representations of the sensor data (e.g., images).
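To make the binary versus multi-class distinction concrete: if a network outputs per-pixel class scores, a multi-class mask takes the highest-scoring class at each pixel, and a binary mask thresholds the score of a single class. The class layout (0 = background, 1 = lane marker, 2 = road boundary), the 0.5 threshold, and the tiny score grid below are hypothetical; they do not describe NVIDIA's network outputs.

```python
BACKGROUND, LANE, BOUNDARY = 0, 1, 2  # hypothetical class indices


def multiclass_mask(scores):
    """scores[row][col] is a list of per-class scores; take the argmax class."""
    return [[max(range(len(px)), key=lambda k: px[k]) for px in row]
            for row in scores]


def binary_mask(scores, cls, threshold=0.5):
    """1 where the score for class cls exceeds threshold, else 0."""
    return [[1 if px[cls] > threshold else 0 for px in row] for row in scores]


scores = [
    [[0.9, 0.05, 0.05], [0.2, 0.7, 0.1], [0.1, 0.1, 0.8]],
    [[0.8, 0.1, 0.1], [0.3, 0.6, 0.1], [0.2, 0.2, 0.6]],
]
print(multiclass_mask(scores))    # [[0, 1, 2], [0, 1, 2]]
print(binary_mask(scores, LANE))  # [[0, 1, 0], [0, 1, 0]]
```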

Pony AI Develops Commercial Robotaxis

Robotaxi startup Pony.ai is aiming for a commercial launch of its autonomous vehicles by 2022. The vehicles are currently being tested on the streets of California. Pony.ai has also partnered with e-commerce platform Yamibuy to provide autonomous last-mile delivery to customers in Irvine. It has already amassed a number of partners and more than $1 billion in funding, including $400 million from Toyota. Read more here.

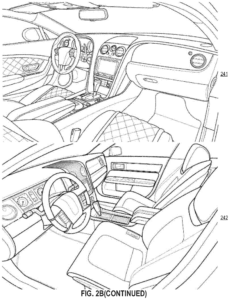

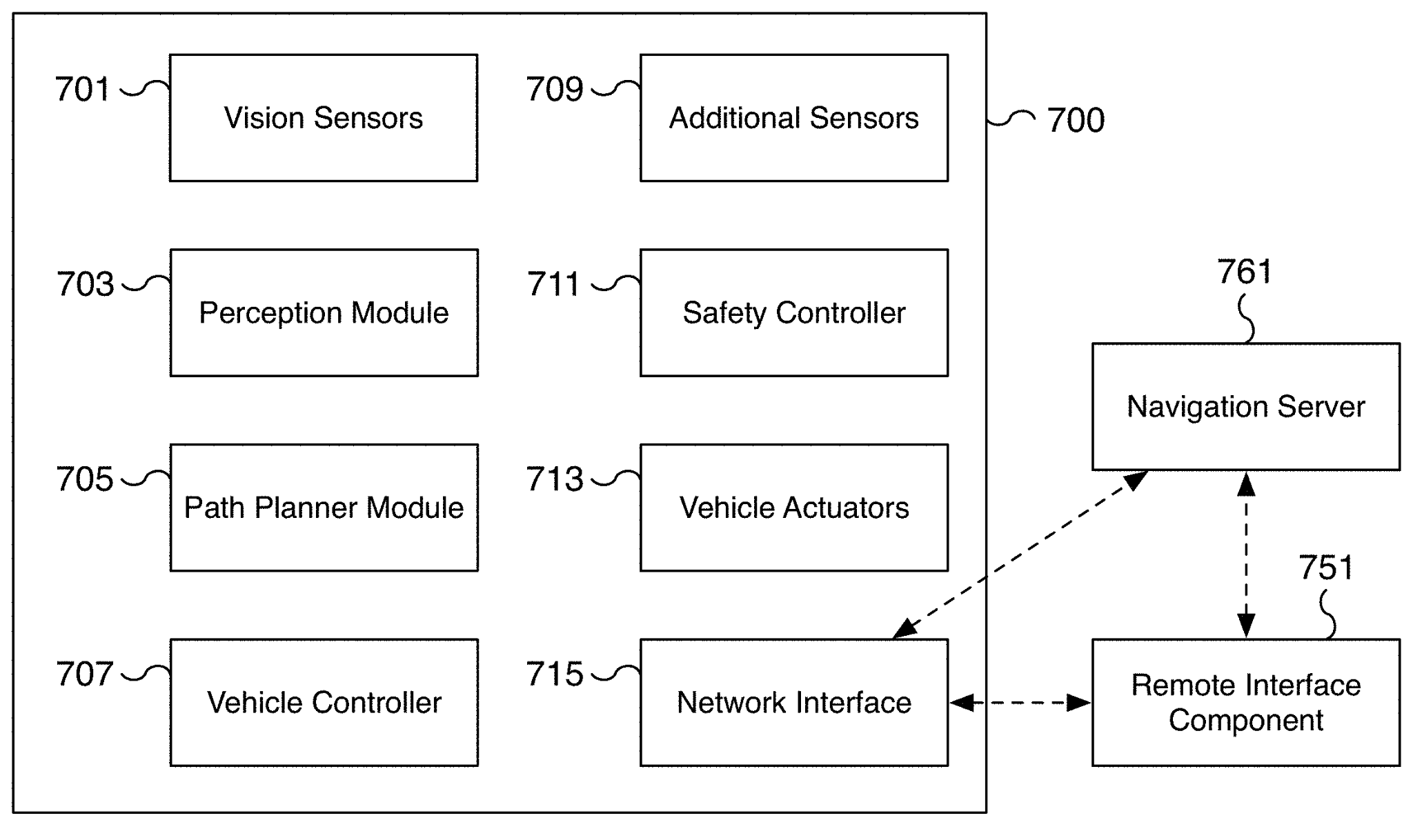

U.S. patent application US20210123751A1 titled “User Preview of the Interior”, assigned to Pony AI, describes autonomous vehicles (AVs) that provide ridesharing and taxi services. Each robotaxi has one or more driving characteristics, such as its cruising velocity, acceleration rate, and driving style or manner. A user is matched with a robotaxi based on the user's preferences for the robotaxi's interior and for its driving manner. The vehicle includes a variety of sensors (e.g., lidars, radars, cameras) to detect and identify objects in the surrounding area, and a variety of actuators, such as electro-mechanical devices or systems, to control the vehicle's throttle response, braking, and steering. More interestingly, the vehicle can recognize, interpret, and analyze road signs (e.g., speed limit, school zone, construction zone) and traffic lights (e.g., red, yellow, green, flashing red), and can adjust its speed based on speed limit signs posted on roadways.
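The matching step can be sketched as scoring each available robotaxi against a rider's stated preferences. The fields, the one-point-per-matching-interior-feature scoring, and the weight of 2 for a matching driving manner are hypothetical choices for illustration; the application does not specify this formula. The speed helper reflects the sign-based speed adjustment described above, under the simple assumption that the vehicle never cruises above the posted limit.

```python
from dataclasses import dataclass


@dataclass
class Robotaxi:
    taxi_id: str
    interior: set          # e.g., {"leather", "child_seat"} (hypothetical tags)
    driving_manner: str    # e.g., "smooth" or "sporty" (hypothetical labels)
    cruise_speed_kph: float


def match_score(taxi, wanted_interior, wanted_manner, manner_weight=2):
    # Hypothetical scoring: one point per requested interior feature,
    # plus a bonus when the driving manner matches.
    score = len(taxi.interior & wanted_interior)
    if taxi.driving_manner == wanted_manner:
        score += manner_weight
    return score


def best_match(taxis, wanted_interior, wanted_manner):
    return max(taxis, key=lambda t: match_score(t, wanted_interior, wanted_manner))


def target_speed(taxi, posted_limit_kph):
    # Assumed rule: never cruise above a recognized speed-limit sign.
    return min(taxi.cruise_speed_kph, posted_limit_kph)


fleet = [
    Robotaxi("A", {"leather", "child_seat"}, "sporty", 100.0),
    Robotaxi("B", {"leather"}, "smooth", 90.0),
]
choice = best_match(fleet, {"leather", "child_seat"}, "smooth")
print(choice.taxi_id, target_speed(choice, 40.0))  # B 40.0
```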

Tesla to Rely on Optical Cameras and a Neural Network Supercomputer for Autonomous Driving

Tesla has revealed a new supercomputer that lets the automaker drop radar and lidar sensors on its self-driving cars in favor of high-quality optical cameras. The company aims to get a computer to respond to a new environment the way a human can, which requires acquiring an immense dataset. The massively powerful supercomputer will be used to train the company's neural-network-based autonomous driving technology, and it is a predecessor to Dojo, the training supercomputer Tesla is developing. Read more here.

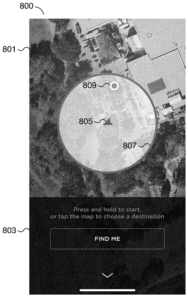

U.S. patent application US20200257317A1 titled “Autonomous and User-Controlled Vehicle Summon to a Target”, assigned to Tesla, describes a technique for an autonomous vehicle to navigate to a target location provided by a user. Once a geographical location associated with a target specified by a user remote from the vehicle is identified, a machine learning model generates a representation of at least a portion of the environment surrounding the vehicle using data from the vehicle's sensors, such as vision data from cameras mounted behind the windshield, forward- and/or side-facing cameras mounted in the pillars, and rear-facing cameras. A path to the target is determined from this representation, and at least one command is then provided to automatically navigate the vehicle based on the determined path and updated sensor data from at least a portion of the vehicle's sensors.
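The "determine a path, then navigate on updated sensor data" loop can be illustrated with a breadth-first search over an occupancy grid, re-run whenever a newer grid arrives. This is a hypothetical stand-in: the grid (substituting for the machine-learning-generated environment representation), the 4-connected moves, and BFS itself are assumptions, not Tesla's planner.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = blocked).

    The grid is a hypothetical stand-in for the vehicle's environment
    representation. Returns a list of (row, col) cells, or None.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk predecessors back to the start to recover the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and nxt not in came_from):
                came_from[nxt] = cell
                queue.append(nxt)
    return None


# Initial representation of a parking lot, then an update with a new obstacle.
lot = [[0, 0, 0],
       [1, 1, 0],
       [0, 0, 0]]
print(plan_path(lot, (0, 0), (2, 0)))
lot[1][2] = 1  # updated sensor data: the only gap is now blocked
print(plan_path(lot, (0, 0), (2, 0)))  # None
```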
